Use case | Routing policy |

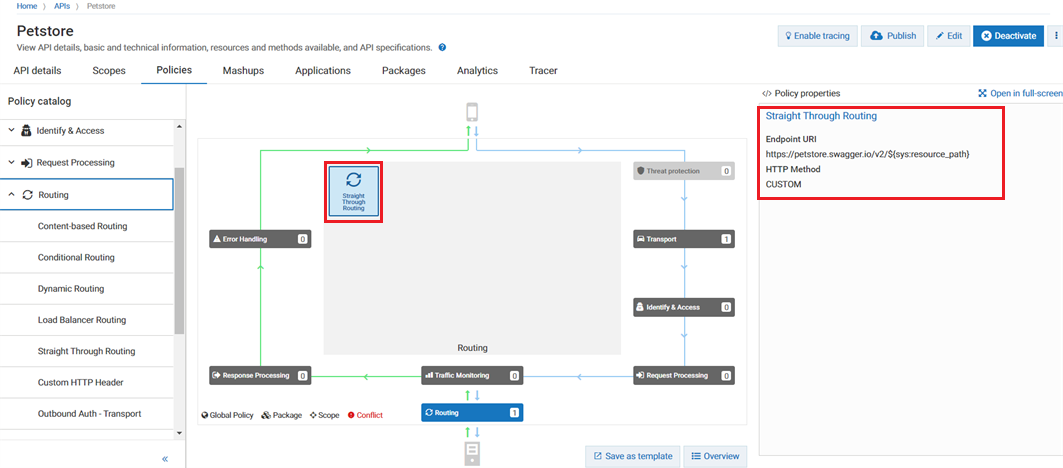

Objective: Expose existing backend services through an API with minimal added complexity. Scenario: Optimize traffic flow between network segments or zones without intermediate processing. Example: In fast networks like data centers, straight-through routing minimizes latency and overhead. This approach ideally suits applications that require low latency and high throughput, such as real-time video streaming, online gaming, or financial trading. | Utilize Straight Through Routing policy to ensure minimal latency and overhead. |

Objective: Direct incoming requests to different backend resources based on specified conditions or criteria. Scenario: Handling sensor data from various devices differently based on specific conditions. Example: In a smart agriculture system, sensors monitor soil moisture levels across different fields. Conditional routing directs data from sensors detecting low moisture levels to irrigation systems for immediate action. | Utilize Conditional Routing policy to handle data differently depending on predefined conditions. |

Objective: Effectively distribute incoming network traffic evenly across multiple servers or resources to optimize resource utilization and improve system performance. Scenario: Handling high volumes of incoming requests in a web hosting environment. Example: During peak shopping seasons, an e-commerce platform utilizes load balancer routing to evenly distribute traffic across servers, preventing overload and enhancing performance for a seamless user experience. | Utilize Load Balancer Routing policy to evenly distribute incoming requests across servers. |

Objective: Ensure secure communication between API Gateway and backend systems by including authentication credentials within transport headers. Scenario: Integration with legacy systems or third-party APIs requiring transport-level authentication. Example: An enterprise application interacts with a legacy backend protected by Basic Authentication over HTTPS. Configuring the Outbound Auth - Transport policy with the appropriate credentials enables API Gateway to seamlessly authenticate and authorize outgoing requests to the legacy system. | Utilize Outbound Auth – Transport policy to authenticate outgoing requests. |

Objective: Embed authentication credentials within the payload message of outgoing requests to satisfy requirements of the native API. Scenario: In instances where APIs mandate authentication credentials within the message payload. Example: An application needs to interact with a third-party service secured with SAML-based authentication. By configuring the Outbound Auth - Message policy with the necessary SAML credentials, API Gateway seamlessly embeds these credentials within the outgoing request’s payload, ensuring secure communication without added complexity. | Utilize Outbound Auth – Message policy to embed authentication credentials within the message payload for secure communication with APIs. |

Objective: Facilitate seamless communication between a legacy system utilizing a JMS queue and a modern RESTful API. Scenario: Modernizing service by offering a RESTful API for order placement while maintaining compatibility with legacy JMS-based communication. Example: A legacy system communicates through a JMS queue for order processing. The company aims to modernize its service by providing a RESTful API for order placement. JMS/AMQP routing policy acts as a bridge between the legacy system and the RESTful API, ensuring smooth transition and compatibility. | Utilize JMS/AMQP Routing policy to seamlessly route requests between the two environments. |
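
To make the difference between some of these policies more concrete, the following Python sketch models three of the ideas from the table: conditional routing, round-robin load balancing, and a transport-level Basic Authentication header. It is only a conceptual illustration and not how API Gateway is configured or implemented. The class names, endpoint URLs, the `soil_moisture` field, and the threshold of 30 are all hypothetical, chosen to mirror the smart agriculture example.

```python
import base64
from dataclasses import dataclass
from itertools import cycle
from typing import Callable


def basic_auth_header(username: str, password: str) -> dict[str, str]:
    """Build an HTTP Basic Authentication header for an outbound request."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return {"Authorization": f"Basic {token}"}


@dataclass
class ConditionalRoute:
    """Hypothetical rule: route to `endpoint` when `condition(request)` is true."""
    condition: Callable[[dict], bool]
    endpoint: str


class Router:
    """Toy router: conditional rules are checked first; requests that match
    no rule are spread over the default endpoints in round-robin order."""

    def __init__(self, rules: list[ConditionalRoute], default_endpoints: list[str]):
        if not default_endpoints:
            raise ValueError("at least one default endpoint is required")
        self._rules = list(rules)
        self._round_robin = cycle(default_endpoints)

    def select_endpoint(self, request: dict) -> str:
        for rule in self._rules:
            if rule.condition(request):
                return rule.endpoint
        return next(self._round_robin)


if __name__ == "__main__":
    # Assumed setup: low-moisture readings go to an irrigation service,
    # everything else alternates between two storage services.
    router = Router(
        rules=[ConditionalRoute(lambda r: r.get("soil_moisture", 100) < 30,
                                "https://irrigation.example/api")],
        default_endpoints=["https://storage-1.example/api",
                           "https://storage-2.example/api"],
    )
    for reading in ({"soil_moisture": 12}, {"soil_moisture": 55}, {"soil_moisture": 60}):
        print(reading, "->", router.select_endpoint(reading))
    print(basic_auth_header("gateway-user", "secret"))
```

In this sketch the conditional rule takes precedence and load balancing acts as the fallback; that ordering is a design choice made for the illustration, not a statement about how the policies combine in the product.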